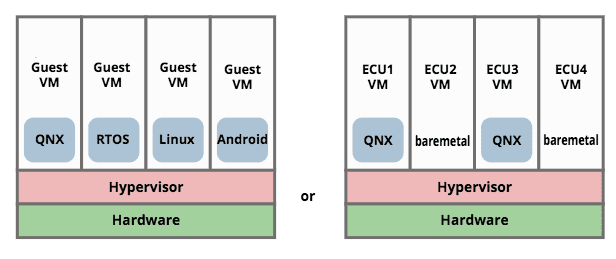

Many embedded and automotive vendors recommend that electronic control unit (ECU) consolidation is best achieved by adopting an architecture with a hypervisor. The idea is to isolate functions in separate guest operating system virtual machines and to restrict access to sensitive resources. A typical consolidated architecture of this kind looks something like:

We argue that this approach is fundamentally flawed, for the following reasons:

- Any operating system that we trust to run safety-critical processes on a multicore processor must be able to guarantee that it schedules all resources so that safety-critical processes get what they need and are properly isolated from other processes. Without such guarantees, the operating system would not be fit for safety-critical use in any case.

- If we have an operating system that provides trustworthy guarantees, we can rely on it to isolate and schedule multiple safety-critical processes on a single multicore processor. We don’t need additional separation: either we trust the OS or we don’t.

- Given that we are bound to have multiple boxes anyway, we do not actually need an architecture that supports multiple OSes on the same physical machine. We could dedicate some boxes to Linux and others to QNX, for example. Alternatively, we could port the missing functionality to the other OS (we’ll be porting anyway, since many of these functions are currently bare-metal).

- Multitasking operating systems are already designed to handle multiple applications. Moreover:

  - Each guest requires its own copy of the operating system and system libraries, so the same software consumes memory several times over. We do not have lots of spare memory to waste on duplicate copies of system software; this would only be justifiable if there were evidence that the resulting improvements in safety or reliability are worth the cost.

  - Adding a hypervisor increases the amount of software in the system. More software means more bugs, more security vulnerabilities and a larger attack surface. As one executive commented, “How do they think they can close one door, by opening two?”

  - Once we put a hypervisor underneath an OS, the guarantees we had for the OS itself no longer apply. All bets are off. A critical bug or vulnerability in the hypervisor can certainly take down the system (the same is true of any operating system, of course, and of the underlying hardware).

  - More software also means more things to update, which is more complicated, more error-prone and higher-risk than updating a single software stack.

- The only fundamental justification for using a hypervisor is to support multiple different operating systems on a single CPU. Since we expect each system or vehicle to require a set of domain controllers anyway, it seems we can avoid that situation altogether simply by dedicating some controllers to QNX, some to Linux, and so on.
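To make the memory argument concrete, here is a rough back-of-the-envelope sketch in Python. All figures are hypothetical assumptions chosen for illustration, not measurements from any real ECU, and the model deliberately ignores page-sharing techniques (such as Linux KSM) that some hypervisor setups use to reduce duplication.

```python
from typing import Sequence


def system_memory_mib(app_mib: Sequence[int], os_copies: int,
                      os_mib: int, hypervisor_mib: int = 0) -> int:
    """Total RAM needed: applications + every OS copy + the hypervisor (if any).

    Deliberately naive: assumes no page sharing between guests.
    """
    return sum(app_mib) + os_copies * os_mib + hypervisor_mib


# Hypothetical per-function footprints (MiB): infotainment, cluster, HUD.
APPS = [300, 150, 100]
OS_MIB = 250  # hypothetical kernel + system libraries per OS instance

# One OS hosting all three functions vs. one guest OS per function.
single_os = system_memory_mib(APPS, os_copies=1, os_mib=OS_MIB)
hypervised = system_memory_mib(APPS, os_copies=3, os_mib=OS_MIB, hypervisor_mib=50)

print(f"single OS:  {single_os} MiB")               # 800 MiB
print(f"hypervisor: {hypervised} MiB")              # 1350 MiB
print(f"overhead:   {hypervised - single_os} MiB")  # 550 MiB
```

With these made-up numbers, three guests under a hypervisor need 550 MiB more than a single OS hosting the same three functions; the real gap depends entirely on the actual OS and library footprints.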

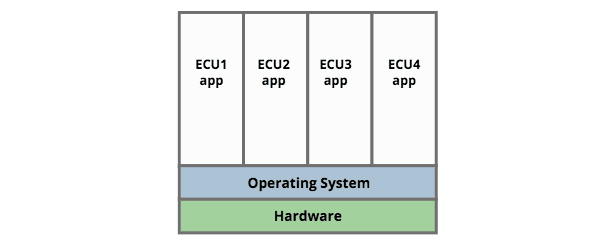

Consolidation Without Hypervisors

If we choose an operating system that schedules and separates multiple applications across the available resources (memory and CPU cores), the overall consolidated approach is simply:

Thus, if we want to combine Infotainment, Cluster and HUD, the preferred approach would be to host all of these functions on a single unit running Linux, QNX or similar. Separation can be achieved in various ways depending on the OS (for example namespaces or Discretionary Access Control), and the architect clearly needs to establish that the OS is fit for this purpose. For example, in discussions around this document it was suggested that in critical scenarios the OS must act as a separation kernel.
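Establishing that an OS is fit for purpose includes showing that it can actually honour CPU-time guarantees. As a minimal sketch of one classic approach (not necessarily what any particular OS does), the Python below assigns periodic tasks to cores using first-fit decreasing and applies the EDF utilization bound per core: on a single core, EDF can schedule a set of periodic tasks with implicit deadlines if and only if their total utilization does not exceed 1. The task names and timings are hypothetical. Linux's `SCHED_DEADLINE` policy performs its own admission test when a task requests a runtime/period reservation, but its rules differ from this sketch. Note also that this covers CPU time only; interference through shared caches, memory bandwidth and other hardware resources needs separate analysis.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Dict, Iterable, List, Optional


@dataclass(frozen=True)
class Task:
    name: str
    runtime_us: int  # worst-case execution time per period
    period_us: int   # implicit deadline: each job must finish within its period

    @property
    def utilization(self) -> Fraction:
        # Exact fractions avoid floating-point rounding at the 1.0 boundary.
        return Fraction(self.runtime_us, self.period_us)


def partition_edf(tasks: Iterable[Task], cores: int) -> Optional[Dict[int, List[str]]]:
    """Assign tasks to cores with first-fit decreasing utilization.

    Each core is assumed to run EDF, which can schedule implicit-deadline
    periodic tasks iff their total utilization is <= 1. Returns a mapping
    {core: [task names]}, or None if some task does not fit on any core.
    """
    load = [Fraction(0)] * cores
    placement: Dict[int, List[str]] = {core: [] for core in range(cores)}
    for task in sorted(tasks, key=lambda t: t.utilization, reverse=True):
        for core in range(cores):
            if load[core] + task.utilization <= 1:
                load[core] += task.utilization
                placement[core].append(task.name)
                break
        else:
            return None  # heuristic failed: reject rather than over-commit
    return placement


# Hypothetical workload for a consolidated Infotainment/Cluster/HUD unit.
TASKS = [
    Task("cluster_render", 8_000, 16_000),   # U = 1/2
    Task("hud_render", 4_000, 16_000),       # U = 1/4
    Task("brake_warning", 1_000, 10_000),    # U = 1/10
    Task("media_decode", 20_000, 40_000),    # U = 1/2
    Task("nav_route", 30_000, 100_000),      # U = 3/10
]

print(partition_edf(TASKS, cores=1))  # None
print(partition_edf(TASKS, cores=2))
# {0: ['cluster_render', 'media_decode'], 1: ['nav_route', 'hud_render', 'brake_warning']}
```

Here the task set is rejected on one core (total utilization 1.65) and accepted on two. First-fit decreasing is only a heuristic, so a `None` result does not prove that no valid assignment exists; it only means this sketch refuses to over-commit.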

Discussions

There was a lot of useful discussion about the contents of this document, which we distil down to two key points:

1) Improved security

"While adding the complexity of a hypervisor (or separation kernel) increases potential vulnerability attack surface, separation makes exploitation more difficult."

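It is not clear that there is any data or research on in-the-wild exploitation to indicate whether the claimed increase in exploitation difficulty actually pays off. And as Geer’s Law states: “Any security technology whose effectiveness can’t be empirically determined is indistinguishable from blind luck.”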

Also, note that this approach does not mitigate the various hardware-level vulnerabilities exposed in modern microprocessors (Rowhammer, Spectre, Meltdown, etc.).

2) Reduced costs and risks

The main justification for consolidation is to reduce engineering cost and risk, because:

- fewer boxes, less material, less weight, less physical space, less wiring

- we can reuse the same code

- no need to revalidate the whole ECU when we make a change in one of the 'guests' (depending on implementation a 'guest' may be a stack with an OS, or a single executable)

However there are some clear additional costs and risks:

- direct costs of the 'hypervisor' or 'separation kernel' itself (including licensing, support, porting, integration and validation)

- reduction in performance due to the hypervisor or separation kernel itself

- reduction in available memory (which can affect performance also) due to guest OS footprints

- increased complexity and risk for security updates (we may now need to update a hypervisor and/or multiple guest OSes)

- another link in the 'chain of trust', resulting in an increase in the attack surface

- another vendor in the supply chain

- depending on the choice of hypervisor/separation-kernel, another binary blob where we have to trust the vendor for long-term support

- risk of missed issues due to the assumption of 'we don't need to re-validate'

- uncertainty leading to wrong implementation (will decision-makers safely distinguish between a dedicated minimal 'hypervisor' of 900 lines as described, a 'separation kernel', and some shiny 'product' based on KVM or similar?)

- risk that the 're-use' turns out to require 're-work' costs because the vendor contribution doesn't play nicely as a guest after all

- blame games between vendors when there are issues with shared functions such as power management, diagnostics, etc.

- risk that people assume 'hypervisor' solves all the problems, until results show that it didn't

- costs associated with recalls and/or accidents arising from failure to address the above

Do you agree?